OLS vs Logistic Scatterplots

Logistic regression models (also known as logit models) provide one approach to analyzing binary response variables. The goal in logistic regression is to model Pr(y=1 | x) = F(xβ). To do this we will make use of the logit transformation.

Let P = Pr(y=1 | x), also written π(x).

Let odds = P/(1-P).

Let log odds or logit g(x) = ln(P/(1-P)) = xβ. In the case of simple logistic regression, i.e., with a single predictor, g(x) = β0 + β1x.

Thus, π(x) can be written

π(x) = exp(β0 + β1x)/(1 + exp(β0 + β1x)).

This can be shown to be true since exp(xβ) is just the odds, and

odds/(1 + odds) = [P/(1-P)]/[1 + P/(1-P)] = [P/(1-P)]/[1/(1-P)] = P.

The coefficients for logistic regression are estimated using maximum likelihood. Unlike least squares regression, in which the coefficients can be estimated in a single pass, the coefficients for logistic regression are estimated through an iterative procedure. This is because the estimating equations in OLS regression are linear in the coefficients, while in logistic regression they are nonlinear in β0 and β1. The goal is to find the coefficients that make the observed data most likely. This is done by maximizing the likelihood function.
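For independent observations (x_i, y_i), i = 1, ..., n (an indexing introduced here only for illustration), the usual Bernoulli form of this likelihood and its logarithm can be sketched as follows, with π(x) as defined above:

```latex
L(\beta_0, \beta_1) = \prod_{i=1}^{n} \pi(x_i)^{y_i}\,\bigl[1 - \pi(x_i)\bigr]^{1 - y_i},
\qquad
\ln L(\beta_0, \beta_1) = \sum_{i=1}^{n} \Bigl\{ y_i \ln \pi(x_i) + (1 - y_i) \ln\bigl[1 - \pi(x_i)\bigr] \Bigr\}
```

The maximized value of ln L is what Stata labels "Log likelihood" in the logit output.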

In terms of the model, the odds are exp(xβ) = exp(β0 + β1x), which can be rewritten as exp(β0)exp(β1x).

If we increase x by one, the odds become exp(β0 + β1(x+1)) = exp(β0 + β1x + β1), which, in turn, can be rewritten as exp(β0)exp(β1x)exp(β1).

Next, to compare the odds before and after adding one to x, we compute the odds ratio,

[exp(β0)exp(β1x)exp(β1)] / [exp(β0)exp(β1x)] = exp(β1),

that is, the odds ratio for a one unit change is just the exponentiated log odds coefficient.
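As a quick numerical check of this result, here is a minimal sketch using made-up values. The locals b0, b1, and x are hypothetical and chosen only for illustration; they are not estimates from any model. The lines should be run together as one block (for example from a do-file) so that the local macros are defined when they are used.

```stata
/* hypothetical values chosen only for illustration */
local b0 = -1
local b1 = 0.5
local x  = 2

/* odds at x and at x+1 */
display exp(`b0' + `b1'*`x')
display exp(`b0' + `b1'*(`x'+1))

/* odds ratio for a one unit increase in x ... */
display exp(`b0' + `b1'*(`x'+1)) / exp(`b0' + `b1'*`x')

/* ... which should equal the exponentiated coefficient */
display exp(`b1')
```

The last two lines should print the same number whatever value is chosen for x, because the terms exp(β0)exp(β1x) cancel in the ratio.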

Before we begin estimating logit models, let's experiment with the grlog command (findit grlog) to see how changes in the constant and the logistic regression coefficient affect the predicted probabilities. Now let's begin with some very simple examples.

Intercept Only Example

use http://www.philender.com/courses/data/honors, clear

describe

Contains data from http://www.gseis.ucla.edu/courses/data/honors.dta

obs: 200

vars: 7 10 Feb 2001 16:27

size: 6,400 (99.8% of memory free)

-------------------------------------------------------------------------------

1. id float %9.0g

2. female float %9.0g fl

3. ses float %9.0g sl

4. lang float %9.0g language test score

5. math float %9.0g math score

6. science float %9.0g science score

7. honors float %9.0g

-------------------------------------------------------------------------------

summarize

Variable | Obs Mean Std. Dev. Min Max

---------+-----------------------------------------------------

id | 200 100.5 57.87918 1 200

female | 200 .545 .4992205 0 1

ses | 200 2.055 .7242914 1 3

lang | 200 52.23 10.25294 28 76

math | 200 52.645 9.368448 33 75

science | 200 51.85 9.900891 26 74

honors | 200 .265 .4424407 0 1

tabulate honors

-> tabulation of honors

honors | Freq. Percent Cum.

------------+-----------------------------------

0 | 147 73.50 73.50

1 | 53 26.50 100.00

------------+-----------------------------------

Total | 200 100.00

logit honors

Logit estimates Number of obs = 200

LR chi2(0) = -0.00

Prob > chi2 = .

Log likelihood = -115.64441 Pseudo R2 = -0.0000

------------------------------------------------------------------------------

honors | Coef. Std. Err. z P>|z| [95% Conf. Interval]

-------------+----------------------------------------------------------------

_cons | -1.020141 .1602206 -6.37 0.000 -1.334167 -.706114

------------------------------------------------------------------------------

display ln(.265/(1-.265))

-1.0201407

predict pr0

list id honors pr0 in 1/10

+---------------------+

| id honors pr0 |

|---------------------|

1. | 8 0 .265 |

2. | 67 0 .265 |

3. | 165 0 .265 |

4. | 173 1 .265 |

5. | 135 1 .265 |

|---------------------|

6. | 5 0 .265 |

7. | 89 0 .265 |

8. | 46 0 .265 |

9. | 111 0 .265 |

10. | 19 0 .265 |

+---------------------+

predict xb, xb

list id honors xb in 1/10

+--------------------------+

| id honors xb |

|--------------------------|

1. | 8 0 -1.020141 |

2. | 67 0 -1.020141 |

3. | 165 0 -1.020141 |

4. | 173 1 -1.020141 |

5. | 135 1 -1.020141 |

|--------------------------|

6. | 5 0 -1.020141 |

7. | 89 0 -1.020141 |

8. | 46 0 -1.020141 |

9. | 111 0 -1.020141 |

10. | 19 0 -1.020141 |

+--------------------------+

/* generate probabilities manually */

generate prm = exp(xb)/(1+exp(xb))

list id honors pr0 prm in 1/10

+----------------------------+

| id honors pr0 prm |

|----------------------------|

1. | 8 0 .265 .265 |

2. | 67 0 .265 .265 |

3. | 165 0 .265 .265 |

4. | 173 1 .265 .265 |

5. | 135 1 .265 .265 |

|----------------------------|

6. | 5 0 .265 .265 |

7. | 89 0 .265 .265 |

8. | 46 0 .265 .265 |

9. | 111 0 .265 .265 |

10. | 19 0 .265 .265 |

+----------------------------+
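The two hand calculations in this example, ln(p/(1-p)) and exp(xb)/(1+exp(xb)), are also available as the built-in Stata functions logit() and invlogit(). The sketch below assumes the session above is still active, so that pr0, xb, and prm exist; prm2 is a hypothetical variable name introduced only for this illustration.

```stata
/* log odds of the observed proportion; same as ln(.265/(1-.265)) */
display logit(.265)

/* back from the estimated constant to a probability */
display invlogit(-1.020141)

/* prm2 is a hypothetical name; xb was created above by -predict xb, xb- */
generate prm2 = invlogit(xb)
summarize pr0 prm prm2
```

If everything agrees, the three probability variables should have the same mean and no variation, since an intercept-only model gives every observation the same predicted probability.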

Dichotomous Predictor Example

codebook female

-------------------------------------------------------------------------------------------------

female (unlabeled)

-------------------------------------------------------------------------------------------------

type: numeric (float)

label: fl

range: [0,1] units: 1

unique values: 2 missing .: 0/200

tabulation: Freq. Numeric Label

91 0 male

109 1 female

tabulate honors female, cell nofreq

| female

honors | male female | Total

-----------+----------------------+----------

0 | 36.50 37.00 | 73.50

1 | 9.00 17.50 | 26.50

-----------+----------------------+----------

Total | 45.50 54.50 | 100.00

display 36.5*17.5/(37*9)

1.9181682

logit honors female

Logit estimates Number of obs = 200

LR chi2(1) = 3.94

Prob > chi2 = 0.0473

Log likelihood = -113.6769 Pseudo R2 = 0.0170

------------------------------------------------------------------------------

honors | Coef. Std. Err. z P>|z| [95% Conf. Interval]

-------------+----------------------------------------------------------------

female | .6513707 .3336752 1.95 0.051 -.0026207 1.305362

_cons | -1.400088 .2631619 -5.32 0.000 -1.915876 -.8842998

------------------------------------------------------------------------------

logit, or

Logit estimates Number of obs = 200

LR chi2(1) = 3.94

Prob > chi2 = 0.0473

Log likelihood = -113.6769 Pseudo R2 = 0.0170

------------------------------------------------------------------------------

honors | Odds Ratio Std. Err. z P>|z| [95% Conf. Interval]

-------------+----------------------------------------------------------------

female | 1.918168 .6400451 1.95 0.051 .9973827 3.689024

------------------------------------------------------------------------------

/* predicted probability for males */

display exp(-1.400088+0*.6513707)/(1+exp(-1.400088+0*.6513707))

.19780215

/* predicted probability for females */

display exp(-1.400088+1*.6513707)/(1+exp(-1.400088+1*.6513707))

.32110086
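The same quantities can be computed from the stored estimates rather than by retyping the coefficients. This is a sketch that assumes logit honors female is still the most recent estimation command, so that _b[female] and _b[_cons] refer to the coefficients shown above.

```stata
/* odds ratio for female: the exponentiated coefficient */
display exp(_b[female])

/* predicted probability for males (female = 0) */
display invlogit(_b[_cons])

/* predicted probability for females (female = 1) */
display invlogit(_b[_cons] + _b[female])
```

Working from _b[] avoids rounding error from copied digits and keeps the calculation correct if the model is re-estimated.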

predict pr1

tablist female pr1 /* findit tablist */

+--------------------------+

| female pr1 Freq |

|--------------------------|

| female .3211009 109 |

| male .1978022 91 |

+--------------------------+

tabstat honors, by(female)

Summary for variables: honors

by categories of: female

female | mean

-------+----------

male | .1978022

female | .3211009

-------+----------

Total | .265

------------------

display ln(.1978022/(1-.1978022))

-1.4000877

display ln((.3211009/(1-.3211009))/(.1978022/(1-.1978022)))

.65137056

display (.3211009/(1-.3211009))/(.1978022/(1-.1978022))

1.918168

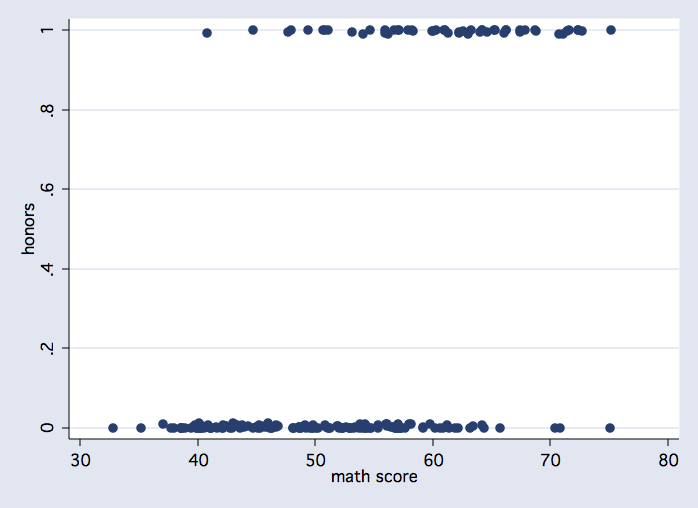

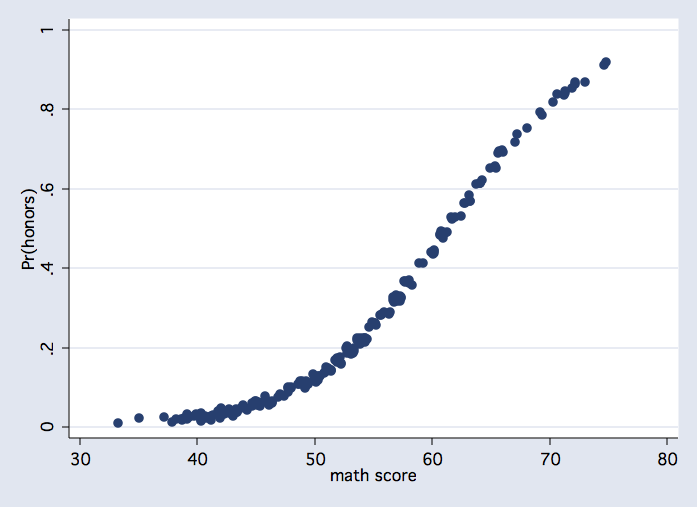

Continuous Predictor Example

correlate honors math

(obs=200)

| honors math

-------------+------------------

honors | 1.0000

math | 0.5417 1.0000

scatter honors math, jitter(2)

logit honors math

Logit estimates Number of obs = 200

LR chi2(1) = 65.27

Prob > chi2 = 0.0000

Log likelihood = -83.008708 Pseudo R2 = 0.2822

------------------------------------------------------------------------------

honors | Coef. Std. Err. z P>|z| [95% Conf. Interval]

-------------+----------------------------------------------------------------

math | .1715239 .0268555 6.39 0.000 .118888 .2241597

_cons | -10.51268 1.545072 -6.80 0.000 -13.54097 -7.484394

------------------------------------------------------------------------------

logit, or

Logit estimates Number of obs = 200

LR chi2(1) = 65.27

Prob > chi2 = 0.0000

Log likelihood = -83.008708 Pseudo R2 = 0.2822

------------------------------------------------------------------------------

honors | Odds Ratio Std. Err. z P>|z| [95% Conf. Interval]

-------------+----------------------------------------------------------------

math | 1.187112 .0318805 6.39 0.000 1.126244 1.251271

------------------------------------------------------------------------------

/* predicted probability for math = 50 */

display exp(-10.51268+50*.1715239)/(1+exp(-10.51268+50*.1715239 ))

.12603452
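The odds ratio reported by logit, or is for a one point change in math. By the derivation given earlier, a change of c points multiplies the odds by exp(c*β1). The following sketch assumes logit honors math is still the most recent estimation command; the ten point change is just an illustrative choice.

```stata
/* one point change: the odds ratio reported by -logit, or- */
display exp(_b[math])

/* ten point change in math, computed two equivalent ways */
display exp(10*_b[math])
display exp(_b[math])^10

/* predicted probability at math = 50 without retyping the estimates */
display invlogit(_b[_cons] + 50*_b[math])
```

The last line should reproduce the hand calculation for math = 50 shown above.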

predict pr2

tablist math pr2, sort(v)

+------------------------+

| math pr2 Freq |

|------------------------|

| 33 .0077492 1 |

| 35 .0108859 1 |

| 37 .0152727 1 |

| 38 .0180788 2 |

| 39 .0213892 6 |

|------------------------|

| 40 .0252901 10 |

| 41 .0298808 7 |

| 42 .0352747 7 |

| 43 .0416004 7 |

| 44 .049003 4 |

|------------------------|

| 45 .0576435 8 |

| 46 .0676991 8 |

| 47 .0793612 3 |

| 48 .0928321 5 |

| 49 .1083207 10 |

|------------------------|

| 50 .1260343 7 |

| 51 .1461699 8 |

| 52 .1689006 6 |

| 53 .1943615 7 |

| 54 .2226324 10 |

|------------------------|

| 55 .2537204 5 |

| 56 .2875437 7 |

| 57 .3239189 13 |

| 58 .3625541 6 |

| 59 .4030502 2 |

|------------------------|

| 60 .4449125 5 |

| 61 .4875715 7 |

| 62 .5304123 4 |

| 63 .5728096 5 |

| 64 .6141635 5 |

|------------------------|

| 65 .6539327 3 |

| 66 .6916608 4 |

| 67 .7269929 2 |

| 68 .7596831 1 |

| 69 .7895918 2 |

|------------------------|

| 70 .8166765 1 |

| 71 .8409768 4 |

| 72 .8625981 3 |

| 73 .8816931 1 |

| 75 .9130621 2 |

+------------------------+

scatter pr2 math, jitter(2)
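To compare a straight-line (OLS) fit with the logistic curve on the same axes, something like the following sketch could be used. It plugs in the coefficients from the logit output above; the axis titles and legend labels are illustrative choices, and the /// line continuations assume the command is run from a do-file.

```stata
/* observed data, OLS line, and fitted logistic curve on one graph */
twoway (scatter honors math, jitter(2))                               ///
       (lfit honors math)                                             ///
       (function y = invlogit(-10.51268 + .1715239*x), range(33 75)), ///
       ytitle("Pr(honors)") xtitle("math score")                      ///
       legend(order(1 "observed" 2 "OLS fit" 3 "logistic fit"))
```

Unlike the OLS line, the logistic curve stays between 0 and 1 over the whole range of math.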


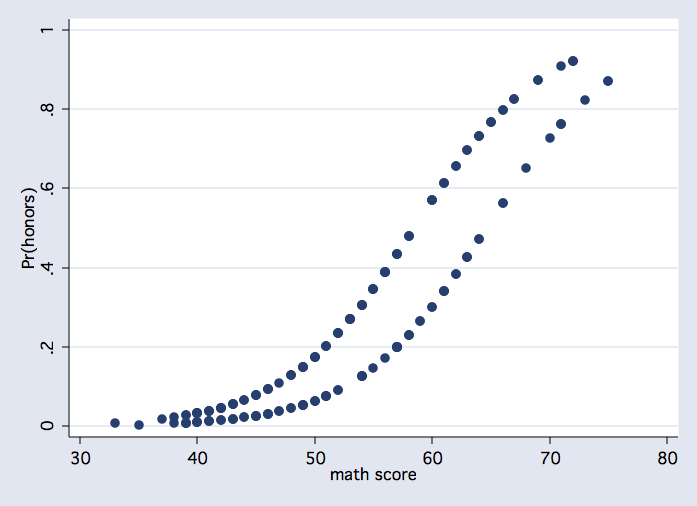

Dichotomous & Continuous Predictors

logit honors female math

Logit estimates Number of obs = 200

LR chi2(2) = 72.83

Prob > chi2 = 0.0000

Log likelihood = -79.23169 Pseudo R2 = 0.3149

------------------------------------------------------------------------------

honors | Coef. Std. Err. z P>|z| [95% Conf. Interval]

-------------+----------------------------------------------------------------

female | 1.120847 .4240297 2.64 0.008 .2897644 1.95193

math | .182538 .0284206 6.42 0.000 .1268347 .2382413

_cons | -11.79228 1.718901 -6.86 0.000 -15.16127 -8.423297

------------------------------------------------------------------------------

logit, or

Logit estimates Number of obs = 200

LR chi2(2) = 72.83

Prob > chi2 = 0.0000

Log likelihood = -79.23169 Pseudo R2 = 0.3149

------------------------------------------------------------------------------

honors | Odds Ratio Std. Err. z P>|z| [95% Conf. Interval]

-------------+----------------------------------------------------------------

female | 3.067452 1.300691 2.64 0.008 1.336113 7.042269

math | 1.20026 .0341121 6.42 0.000 1.135229 1.269015

------------------------------------------------------------------------------

predict pr3

scatter pr3 math
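With two predictors the predicted probability depends on both female and math, so the scatterplot of pr3 against math contains two curves. The sketch below computes one point on each curve by hand and then plots the two groups with separate markers. It assumes logit honors female math is still the most recent estimation command; math = 60 is an arbitrary illustrative value, and the /// continuations assume a do-file.

```stata
/* predicted probabilities at math = 60 */
display invlogit(_b[_cons] + 0*_b[female] + 60*_b[math])   /* male   */
display invlogit(_b[_cons] + 1*_b[female] + 60*_b[math])   /* female */

/* separate markers by gender so the two curves are easier to tell apart */
twoway (scatter pr3 math if female==0)                     ///
       (scatter pr3 math if female==1),                    ///
       legend(order(1 "male" 2 "female")) ytitle("Pr(honors)")
```

On the log odds scale the two curves are parallel lines separated by the female coefficient; on the probability scale the gap between them varies with math.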

/* estimated probabilities only for values observed in the sample */

table math female, cont(mean pr3)

    ------------------------------
    math      |       female
    score     |     male    female
    ----------+-------------------
           33 |           .0094928
           35 | .0044808
           37 |           .0195023
           38 | .0077227  .0233167
           39 | .0092549  .0278561
           40 | .0110878   .033249
           41 | .0132787  .0396435
           42 | .0158956  .0472077
           43 | .0190184  .0561309
           44 | .0227404  .0666227
           45 | .0271706  .0789118
           46 | .0324353  .0932411
           47 | .0386795  .1098622
           48 | .0460686  .1290245
           49 | .0547889  .1509623
           50 | .0650472  .1758769
           51 | .0770696  .2039158
           52 | .0910975  .2351493
           53 |            .269547
           54 | .1261727  .3069571
           55 | .1477078  .3470921
           56 | .1721942  .3895253
           57 | .1997884  .4337002
           58 | .2305728  .4789544
           59 | .2645327
           60 | .3015341  .5697531
           61 | .3413092  .6138161
           62 | .3834506  .6560904
           63 |  .427419  .6960288
           64 | .4725647   .733215
           65 |           .7673725
           66 |  .563462  .7983593
           67 |           .8261538
           68 | .6502869
           69 |           .8725489
           70 | .7281737
           71 | .7627678   .907942
           72 |           .9221051
           73 | .8224434
           75 | .8696725
    ------------------------------

/* predicted probabilities for males for math 33 to 75 */

margins, at(female=0 math=(33(1)75)) noatlegend

Adjusted predictions Number of obs = 200

Model VCE : OIM

Expression : Pr(honors), predict()

------------------------------------------------------------------------------

| Delta-method

| Margin Std. Err. z P>|z| [95% Conf. Interval]

-------------+----------------------------------------------------------------

_at |

1 | .0031146 .0025359 1.23 0.219 -.0018556 .0080849

2 | .003736 .002943 1.27 0.204 -.0020321 .0095042

3 | .0044808 .0034116 1.31 0.189 -.0022059 .0111676

4 | .0053734 .0039502 1.36 0.174 -.002369 .0131157

5 | .0064425 .004568 1.41 0.158 -.0025107 .0153957

6 | .0077227 .0052752 1.46 0.143 -.0026165 .0180619

7 | .0092549 .0060829 1.52 0.128 -.0026674 .0211772

8 | .0110878 .0070032 1.58 0.113 -.0026383 .0248138

9 | .0132787 .008049 1.65 0.099 -.002497 .0290544

10 | .0158956 .0092339 1.72 0.085 -.0022024 .0339937

11 | .0190184 .0105722 1.80 0.072 -.0017028 .0397395

12 | .0227404 .0120787 1.88 0.060 -.0009335 .0464142

13 | .0271706 .0137682 1.97 0.048 .0001855 .0541557

14 | .0324353 .0156553 2.07 0.038 .0017515 .0631191

15 | .0386795 .0177542 2.18 0.029 .003882 .0734771

16 | .0460686 .0200781 2.29 0.022 .0067163 .085421

17 | .0547889 .0226392 2.42 0.016 .0104169 .0991609

18 | .0650472 .025448 2.56 0.011 .0151699 .1149244

19 | .0770696 .0285138 2.70 0.007 .0211836 .1329556

20 | .0910975 .031844 2.86 0.004 .0286844 .1535107

21 | .1073817 .0354449 3.03 0.002 .0379109 .1768525

22 | .1261727 .0393212 3.21 0.001 .0491046 .2032407

23 | .1477078 .0434749 3.40 0.001 .0624987 .232917

24 | .1721942 .0479037 3.59 0.000 .0783047 .2660838

25 | .1997884 .0525966 3.80 0.000 .0967009 .3028759

26 | .2305729 .0575269 4.01 0.000 .1178223 .3433234

27 | .2645327 .0626431 4.22 0.000 .1417545 .3873108

28 | .3015341 .0678593 4.44 0.000 .1685323 .4345358

29 | .3413092 .0730471 4.67 0.000 .1981394 .4844789

30 | .3834506 .0780334 4.91 0.000 .2305079 .5363933

31 | .427419 .082607 5.17 0.000 .2655122 .5893257

32 | .4725647 .0865352 5.46 0.000 .3029588 .6421705

33 | .5181636 .0895888 5.78 0.000 .3425727 .6937545

34 | .563462 .0915711 6.15 0.000 .3839858 .7429381

35 | .6077257 .0923439 6.58 0.000 .426735 .7887164

36 | .6502868 .0918459 7.08 0.000 .4702722 .8303014

37 | .6905813 .090099 7.66 0.000 .5139904 .8671722

38 | .7281737 .0872028 8.35 0.000 .5572593 .899088

39 | .7627678 .0833182 9.15 0.000 .5994672 .9260684

40 | .7942035 .0786462 10.10 0.000 .6400598 .9483473

41 | .8224434 .0734054 11.20 0.000 .6785715 .9663153

42 | .8475519 .0678112 12.50 0.000 .7146443 .9804595

43 | .8696725 .062061 14.01 0.000 .7480351 .9913099

------------------------------------------------------------------------------

/* predicted probabilities for females for math 33 to 75 */

margins, at(female=1 math=(33(1)75)) noatlegend

Adjusted predictions Number of obs = 200

Model VCE : OIM

Expression : Pr(honors), predict()

------------------------------------------------------------------------------

| Delta-method

| Margin Std. Err. z P>|z| [95% Conf. Interval]

-------------+----------------------------------------------------------------

_at |

1 | .0094928 .0064778 1.47 0.143 -.0032035 .0221891

2 | .0113722 .0074494 1.53 0.127 -.0032282 .0259727

3 | .0136186 .008549 1.59 0.111 -.0031372 .0303744

4 | .0163014 .0097889 1.67 0.096 -.0028845 .0354873

5 | .0195023 .0111807 1.74 0.081 -.0024115 .041416

6 | .0233167 .0127353 1.83 0.067 -.0016439 .0482774

7 | .0278561 .0144619 1.93 0.054 -.0004887 .0562008

8 | .033249 .0163675 2.03 0.042 .0011694 .0653287

9 | .0396435 .0184554 2.15 0.032 .0034716 .0758154

10 | .0472077 .0207245 2.28 0.023 .0065884 .087827

11 | .0561309 .0231681 2.42 0.015 .0107223 .1015394

12 | .0666227 .0257726 2.59 0.010 .0161093 .1171362

13 | .0789118 .0285176 2.77 0.006 .0230183 .1348052

14 | .0932411 .0313752 2.97 0.003 .0317468 .1547355

15 | .1098622 .0343119 3.20 0.001 .0426121 .1771123

16 | .1290245 .0372907 3.46 0.001 .0559361 .2021128

17 | .1509623 .0402752 3.75 0.000 .0720242 .2299003

18 | .1758769 .0432356 4.07 0.000 .0911366 .2606172

19 | .2039158 .0461535 4.42 0.000 .1134567 .2943749

20 | .2351493 .0490259 4.80 0.000 .1390603 .3312384

21 | .2695471 .0518651 5.20 0.000 .1678933 .3712008

22 | .3069571 .0546905 5.61 0.000 .1997657 .4141486

23 | .3470921 .0575137 6.03 0.000 .2343673 .4598169

24 | .3895253 .0603187 6.46 0.000 .2713028 .5077479

25 | .4337002 .0630448 6.88 0.000 .3101346 .5572659

26 | .4789544 .0655796 7.30 0.000 .3504208 .6074881

27 | .5245567 .0677677 7.74 0.000 .3917344 .6573791

28 | .5697531 .0694344 8.21 0.000 .4336642 .705842

29 | .6138161 .0704157 8.72 0.000 .475804 .7518283

30 | .6560904 .0705877 9.29 0.000 .5177411 .7944397

31 | .6960287 .0698864 9.96 0.000 .5590539 .8330036

32 | .733215 .068315 10.73 0.000 .59932 .8671099

33 | .7673725 .0659387 11.64 0.000 .6381349 .89661

34 | .7983594 .0628717 12.70 0.000 .6751332 .9215855

35 | .8261538 .0592585 13.94 0.000 .7100092 .9422983

36 | .8508328 .0552568 15.40 0.000 .7425315 .959134

37 | .8725489 .0510211 17.10 0.000 .7725493 .9725484

38 | .8915066 .0466923 19.09 0.000 .7999914 .9830218

39 | .907942 .0423899 21.42 0.000 .8248592 .9910247

40 | .9221052 .0382099 24.13 0.000 .8472151 .9969952

41 | .9342471 .0342239 27.30 0.000 .8671694 1.001325

42 | .9446101 .0304818 30.99 0.000 .8848668 1.004353

43 | .9534213 .0270142 35.29 0.000 .9004744 1.006368

------------------------------------------------------------------------------

/* using factor variables */

logit honors i.female math

Iteration 0: log likelihood = -115.64441

Iteration 1: log likelihood = -82.342272

Iteration 2: log likelihood = -79.276145

Iteration 3: log likelihood = -79.231754

Iteration 4: log likelihood = -79.23169

Iteration 5: log likelihood = -79.23169

Logistic regression Number of obs = 200

LR chi2(2) = 72.83

Prob > chi2 = 0.0000

Log likelihood = -79.23169 Pseudo R2 = 0.3149

------------------------------------------------------------------------------

honors | Coef. Std. Err. z P>|z| [95% Conf. Interval]

-------------+----------------------------------------------------------------

1.female | 1.120847 .4240394 2.64 0.008 .2897454 1.951949

math | .182538 .0284217 6.42 0.000 .1268324 .2382435

_cons | -11.79228 1.718976 -6.86 0.000 -15.16141 -8.423151

------------------------------------------------------------------------------

margins female, at(math=(33(1)75)) noatlegend

Adjusted predictions Number of obs = 200

Model VCE : OIM

Expression : Pr(honors), predict()

------------------------------------------------------------------------------

| Delta-method

| Margin Std. Err. z P>|z| [95% Conf. Interval]

-------------+----------------------------------------------------------------

_at#female |

1 0 | .0031146 .0025359 1.23 0.219 -.0018556 .0080849

1 1 | .0094928 .0064778 1.47 0.143 -.0032035 .0221891

2 0 | .003736 .002943 1.27 0.204 -.0020321 .0095042

2 1 | .0113722 .0074494 1.53 0.127 -.0032282 .0259727

3 0 | .0044808 .0034116 1.31 0.189 -.0022059 .0111676

3 1 | .0136186 .008549 1.59 0.111 -.0031372 .0303744

4 0 | .0053734 .0039502 1.36 0.174 -.002369 .0131157

4 1 | .0163014 .0097889 1.67 0.096 -.0028845 .0354873

5 0 | .0064425 .004568 1.41 0.158 -.0025107 .0153957

5 1 | .0195023 .0111807 1.74 0.081 -.0024115 .041416

6 0 | .0077227 .0052752 1.46 0.143 -.0026165 .0180619

6 1 | .0233167 .0127353 1.83 0.067 -.0016439 .0482774

7 0 | .0092549 .0060829 1.52 0.128 -.0026674 .0211772

7 1 | .0278561 .0144619 1.93 0.054 -.0004887 .0562008

8 0 | .0110878 .0070032 1.58 0.113 -.0026383 .0248138

8 1 | .033249 .0163675 2.03 0.042 .0011694 .0653287

9 0 | .0132787 .008049 1.65 0.099 -.002497 .0290544

9 1 | .0396435 .0184554 2.15 0.032 .0034716 .0758154

10 0 | .0158956 .0092339 1.72 0.085 -.0022024 .0339937

10 1 | .0472077 .0207245 2.28 0.023 .0065884 .087827

11 0 | .0190184 .0105722 1.80 0.072 -.0017028 .0397395

11 1 | .0561309 .0231681 2.42 0.015 .0107223 .1015394

12 0 | .0227404 .0120787 1.88 0.060 -.0009335 .0464142

12 1 | .0666227 .0257726 2.59 0.010 .0161093 .1171362

13 0 | .0271706 .0137682 1.97 0.048 .0001855 .0541557

13 1 | .0789118 .0285176 2.77 0.006 .0230183 .1348052

14 0 | .0324353 .0156553 2.07 0.038 .0017515 .0631191

14 1 | .0932411 .0313752 2.97 0.003 .0317468 .1547355

15 0 | .0386795 .0177542 2.18 0.029 .003882 .0734771

15 1 | .1098622 .0343119 3.20 0.001 .0426121 .1771123

16 0 | .0460686 .0200781 2.29 0.022 .0067163 .085421

16 1 | .1290245 .0372907 3.46 0.001 .0559361 .2021128

17 0 | .0547889 .0226392 2.42 0.016 .0104169 .0991609

17 1 | .1509623 .0402752 3.75 0.000 .0720242 .2299003

18 0 | .0650472 .025448 2.56 0.011 .0151699 .1149244

18 1 | .1758769 .0432356 4.07 0.000 .0911366 .2606172

19 0 | .0770696 .0285138 2.70 0.007 .0211836 .1329556

19 1 | .2039158 .0461535 4.42 0.000 .1134567 .2943749

20 0 | .0910975 .031844 2.86 0.004 .0286844 .1535107

20 1 | .2351493 .0490259 4.80 0.000 .1390603 .3312384

21 0 | .1073817 .0354449 3.03 0.002 .0379109 .1768525

21 1 | .2695471 .0518651 5.20 0.000 .1678933 .3712008

22 0 | .1261727 .0393212 3.21 0.001 .0491046 .2032407

22 1 | .3069571 .0546905 5.61 0.000 .1997657 .4141486

23 0 | .1477078 .0434749 3.40 0.001 .0624987 .232917

23 1 | .3470921 .0575137 6.03 0.000 .2343673 .4598169

24 0 | .1721942 .0479037 3.59 0.000 .0783047 .2660838

24 1 | .3895253 .0603187 6.46 0.000 .2713028 .5077479

25 0 | .1997884 .0525966 3.80 0.000 .0967009 .3028759

25 1 | .4337002 .0630448 6.88 0.000 .3101346 .5572659

26 0 | .2305729 .0575269 4.01 0.000 .1178223 .3433234

26 1 | .4789544 .0655796 7.30 0.000 .3504208 .6074881

27 0 | .2645327 .0626431 4.22 0.000 .1417545 .3873108

27 1 | .5245567 .0677677 7.74 0.000 .3917344 .6573791

28 0 | .3015341 .0678593 4.44 0.000 .1685323 .4345358

28 1 | .5697531 .0694344 8.21 0.000 .4336642 .705842

29 0 | .3413092 .0730471 4.67 0.000 .1981394 .4844789

29 1 | .6138161 .0704157 8.72 0.000 .475804 .7518283

30 0 | .3834506 .0780334 4.91 0.000 .2305079 .5363933

30 1 | .6560904 .0705877 9.29 0.000 .5177411 .7944397

31 0 | .427419 .082607 5.17 0.000 .2655122 .5893257

31 1 | .6960287 .0698864 9.96 0.000 .5590539 .8330036

32 0 | .4725647 .0865352 5.46 0.000 .3029588 .6421705

32 1 | .733215 .068315 10.73 0.000 .59932 .8671099

33 0 | .5181636 .0895888 5.78 0.000 .3425727 .6937545

33 1 | .7673725 .0659387 11.64 0.000 .6381349 .89661

34 0 | .563462 .0915711 6.15 0.000 .3839858 .7429381

34 1 | .7983594 .0628717 12.70 0.000 .6751332 .9215855

35 0 | .6077257 .0923439 6.58 0.000 .426735 .7887164

35 1 | .8261538 .0592585 13.94 0.000 .7100092 .9422983

36 0 | .6502868 .0918459 7.08 0.000 .4702722 .8303014

36 1 | .8508328 .0552568 15.40 0.000 .7425315 .959134

37 0 | .6905813 .090099 7.66 0.000 .5139904 .8671722

37 1 | .8725489 .0510211 17.10 0.000 .7725493 .9725484

38 0 | .7281737 .0872028 8.35 0.000 .5572593 .899088

38 1 | .8915066 .0466923 19.09 0.000 .7999914 .9830218

39 0 | .7627678 .0833182 9.15 0.000 .5994672 .9260684

39 1 | .907942 .0423899 21.42 0.000 .8248592 .9910247

40 0 | .7942035 .0786462 10.10 0.000 .6400598 .9483473

40 1 | .9221052 .0382099 24.13 0.000 .8472151 .9969952

41 0 | .8224434 .0734054 11.20 0.000 .6785715 .9663153

41 1 | .9342471 .0342239 27.30 0.000 .8671694 1.001325

42 0 | .8475519 .0678112 12.50 0.000 .7146443 .9804595

42 1 | .9446101 .0304818 30.99 0.000 .8848668 1.004353

43 0 | .8696725 .062061 14.01 0.000 .7480351 .9913099

43 1 | .9534213 .0270142 35.29 0.000 .9004744 1.006368

------------------------------------------------------------------------------
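A long table of adjusted predictions is often easier to read as a graph. The sketch below assumes logit honors i.female math is still the most recent estimation command and that the installed Stata release includes marginsplot; the coarser grid 33(6)75 is an illustrative choice to keep the plot uncluttered.

```stata
/* adjusted predictions on a coarser grid of math scores, then a plot */
margins female, at(math=(33(6)75)) noatlegend
marginsplot

/* the same predictions on the log odds scale */
margins female, at(math=75) predict(xb)
```

Some upper confidence limits in the table above exceed 1 because the delta-method interval is computed directly on the probability scale. Applying invlogit() to the limits reported on the log odds scale by the last command gives intervals that stay between 0 and 1.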

/* estimated probabilities only for values observed in the sample */

table math female, cont(mean pr3)

------------------------------

math | female

score | male female

----------+-------------------

33 | .0094928

35 | .0044808

37 | .0195023

38 | .0077227 .0233167

39 | .0092549 .0278561

40 | .0110878 .033249

41 | .0132787 .0396435

42 | .0158956 .0472077

43 | .0190184 .0561309

44 | .0227404 .0666227

45 | .0271706 .0789118

46 | .0324353 .0932411

47 | .0386795 .1098622

48 | .0460686 .1290245

49 | .0547889 .1509623

50 | .0650472 .1758769

51 | .0770696 .2039158

52 | .0910975 .2351493

53 | .269547

54 | .1261727 .3069571

55 | .1477078 .3470921

56 | .1721942 .3895253

57 | .1997884 .4337002

58 | .2305728 .4789544

59 | .2645327

60 | .3015341 .5697531

61 | .3413092 .6138161

62 | .3834506 .6560904

63 | .427419 .6960288

64 | .4725647 .733215

65 | .7673725

66 | .563462 .7983593

67 | .8261538

68 | .6502869

69 | .8725489

70 | .7281737

71 | .7627678 .907942

72 | .9221051

73 | .8224434

75 | .8696725

------------------------------

/* predicted probabilities for males for math 33 to 75 */

margins, at(female=0 math=(33(1)75)) noatlegend

Adjusted predictions Number of obs = 200

Model VCE : OIM

Expression : Pr(honors), predict()

------------------------------------------------------------------------------

| Delta-method

| Margin Std. Err. z P>|z| [95% Conf. Interval]

-------------+----------------------------------------------------------------

_at |

1 | .0031146 .0025359 1.23 0.219 -.0018556 .0080849

2 | .003736 .002943 1.27 0.204 -.0020321 .0095042

3 | .0044808 .0034116 1.31 0.189 -.0022059 .0111676

4 | .0053734 .0039502 1.36 0.174 -.002369 .0131157

5 | .0064425 .004568 1.41 0.158 -.0025107 .0153957

6 | .0077227 .0052752 1.46 0.143 -.0026165 .0180619

7 | .0092549 .0060829 1.52 0.128 -.0026674 .0211772

8 | .0110878 .0070032 1.58 0.113 -.0026383 .0248138

9 | .0132787 .008049 1.65 0.099 -.002497 .0290544

10 | .0158956 .0092339 1.72 0.085 -.0022024 .0339937

11 | .0190184 .0105722 1.80 0.072 -.0017028 .0397395

12 | .0227404 .0120787 1.88 0.060 -.0009335 .0464142

13 | .0271706 .0137682 1.97 0.048 .0001855 .0541557

14 | .0324353 .0156553 2.07 0.038 .0017515 .0631191

15 | .0386795 .0177542 2.18 0.029 .003882 .0734771

16 | .0460686 .0200781 2.29 0.022 .0067163 .085421

17 | .0547889 .0226392 2.42 0.016 .0104169 .0991609

18 | .0650472 .025448 2.56 0.011 .0151699 .1149244

19 | .0770696 .0285138 2.70 0.007 .0211836 .1329556

20 | .0910975 .031844 2.86 0.004 .0286844 .1535107

21 | .1073817 .0354449 3.03 0.002 .0379109 .1768525

22 | .1261727 .0393212 3.21 0.001 .0491046 .2032407

23 | .1477078 .0434749 3.40 0.001 .0624987 .232917

24 | .1721942 .0479037 3.59 0.000 .0783047 .2660838

25 | .1997884 .0525966 3.80 0.000 .0967009 .3028759

26 | .2305729 .0575269 4.01 0.000 .1178223 .3433234

27 | .2645327 .0626431 4.22 0.000 .1417545 .3873108

28 | .3015341 .0678593 4.44 0.000 .1685323 .4345358

29 | .3413092 .0730471 4.67 0.000 .1981394 .4844789

30 | .3834506 .0780334 4.91 0.000 .2305079 .5363933

31 | .427419 .082607 5.17 0.000 .2655122 .5893257

32 | .4725647 .0865352 5.46 0.000 .3029588 .6421705

33 | .5181636 .0895888 5.78 0.000 .3425727 .6937545

34 | .563462 .0915711 6.15 0.000 .3839858 .7429381

35 | .6077257 .0923439 6.58 0.000 .426735 .7887164

36 | .6502868 .0918459 7.08 0.000 .4702722 .8303014

37 | .6905813 .090099 7.66 0.000 .5139904 .8671722

38 | .7281737 .0872028 8.35 0.000 .5572593 .899088

39 | .7627678 .0833182 9.15 0.000 .5994672 .9260684

40 | .7942035 .0786462 10.10 0.000 .6400598 .9483473

41 | .8224434 .0734054 11.20 0.000 .6785715 .9663153

42 | .8475519 .0678112 12.50 0.000 .7146443 .9804595

43 | .8696725 .062061 14.01 0.000 .7480351 .9913099

------------------------------------------------------------------------------

/* predicted probabilities for females for math 33 to 75 */

margins, at(female=1 math=(33(1)75)) noatlegend

Adjusted predictions                              Number of obs   =        200
Model VCE    : OIM

Expression   : Pr(honors), predict()

------------------------------------------------------------------------------
             |            Delta-method
             |     Margin   Std. Err.      z    P>|z|     [95% Conf. Interval]
-------------+----------------------------------------------------------------
         _at |
          1  |   .0094928   .0064778     1.47   0.143    -.0032035    .0221891
          2  |   .0113722   .0074494     1.53   0.127    -.0032282    .0259727
          3  |   .0136186    .008549     1.59   0.111    -.0031372    .0303744
          4  |   .0163014   .0097889     1.67   0.096    -.0028845    .0354873
          5  |   .0195023   .0111807     1.74   0.081    -.0024115     .041416
          6  |   .0233167   .0127353     1.83   0.067    -.0016439    .0482774
          7  |   .0278561   .0144619     1.93   0.054    -.0004887    .0562008
          8  |    .033249   .0163675     2.03   0.042     .0011694    .0653287
          9  |   .0396435   .0184554     2.15   0.032     .0034716    .0758154
         10  |   .0472077   .0207245     2.28   0.023     .0065884     .087827
         11  |   .0561309   .0231681     2.42   0.015     .0107223    .1015394
         12  |   .0666227   .0257726     2.59   0.010     .0161093    .1171362
         13  |   .0789118   .0285176     2.77   0.006     .0230183    .1348052
         14  |   .0932411   .0313752     2.97   0.003     .0317468    .1547355
         15  |   .1098622   .0343119     3.20   0.001     .0426121    .1771123
         16  |   .1290245   .0372907     3.46   0.001     .0559361    .2021128
         17  |   .1509623   .0402752     3.75   0.000     .0720242    .2299003
         18  |   .1758769   .0432356     4.07   0.000     .0911366    .2606172
         19  |   .2039158   .0461535     4.42   0.000     .1134567    .2943749
         20  |   .2351493   .0490259     4.80   0.000     .1390603    .3312384
         21  |   .2695471   .0518651     5.20   0.000     .1678933    .3712008
         22  |   .3069571   .0546905     5.61   0.000     .1997657    .4141486
         23  |   .3470921   .0575137     6.03   0.000     .2343673    .4598169
         24  |   .3895253   .0603187     6.46   0.000     .2713028    .5077479
         25  |   .4337002   .0630448     6.88   0.000     .3101346    .5572659
         26  |   .4789544   .0655796     7.30   0.000     .3504208    .6074881
         27  |   .5245567   .0677677     7.74   0.000     .3917344    .6573791
         28  |   .5697531   .0694344     8.21   0.000     .4336642     .705842
         29  |   .6138161   .0704157     8.72   0.000      .475804    .7518283
         30  |   .6560904   .0705877     9.29   0.000     .5177411    .7944397
         31  |   .6960287   .0698864     9.96   0.000     .5590539    .8330036
         32  |    .733215    .068315    10.73   0.000       .59932    .8671099
         33  |   .7673725   .0659387    11.64   0.000     .6381349      .89661
         34  |   .7983594   .0628717    12.70   0.000     .6751332    .9215855
         35  |   .8261538   .0592585    13.94   0.000     .7100092    .9422983
         36  |   .8508328   .0552568    15.40   0.000     .7425315     .959134
         37  |   .8725489   .0510211    17.10   0.000     .7725493    .9725484
         38  |   .8915066   .0466923    19.09   0.000     .7999914    .9830218
         39  |    .907942   .0423899    21.42   0.000     .8248592    .9910247
         40  |   .9221052   .0382099    24.13   0.000     .8472151    .9969952
         41  |   .9342471   .0342239    27.30   0.000     .8671694    1.001325
         42  |   .9446101   .0304818    30.99   0.000     .8848668    1.004353
         43  |   .9534213   .0270142    35.29   0.000     .9004744    1.006368
------------------------------------------------------------------------------
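Note that the last three upper bounds in the table exceed 1. The intervals come from the delta method applied on the probability scale, so nothing keeps them inside [0, 1]. One common workaround, offered here as a sketch and not as part of the original notes, is to ask margins for the linear prediction (the log odds) and transform its interval bounds with invlogit(). The sketch assumes a Stata version in which margins returns r(table) and that the logit model is still the active estimation result; the matrix name T is arbitrary.

```stata
/* log odds and its 95% interval for a female with math = 75 */
margins, at(female=1 math=75) predict(xb)

/* copy the results table, then back-transform the bounds to probabilities */
matrix T = r(table)
display invlogit(T[rownumb(T,"ll"),1]) "  " invlogit(T[rownumb(T,"ul"),1])
```

Because invlogit() maps any real number into (0, 1), the back-transformed bounds cannot fall outside the range of a probability.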

/* using factor variables */

logit honors i.female math

Iteration 0:   log likelihood = -115.64441
Iteration 1:   log likelihood = -82.342272
Iteration 2:   log likelihood = -79.276145
Iteration 3:   log likelihood = -79.231754
Iteration 4:   log likelihood =  -79.23169
Iteration 5:   log likelihood =  -79.23169

Logistic regression                               Number of obs   =        200
                                                  LR chi2(2)      =      72.83
                                                  Prob > chi2     =     0.0000
Log likelihood =  -79.23169                       Pseudo R2       =     0.3149

------------------------------------------------------------------------------
      honors |      Coef.   Std. Err.      z    P>|z|     [95% Conf. Interval]
-------------+----------------------------------------------------------------
    1.female |   1.120847   .4240394     2.64   0.008     .2897454    1.951949
        math |    .182538   .0284217     6.42   0.000     .1268324    .2382435
       _cons |  -11.79228   1.718976    -6.86   0.000    -15.16141   -8.423151
------------------------------------------------------------------------------
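To tie these coefficients back to the odds-ratio result derived earlier, the sketch below exponentiates the two slopes and rebuilds two predicted probabilities by hand with invlogit(). All numbers are copied from the coefficient table above. In the margins tables _at 1 corresponds to math = 33, so the last two results should agree, up to rounding of the coefficients, with the margins reported for _at 1 (.0031146 for female = 0 and .0094928 for female = 1).

```stata
/* odds ratios: exponentiated log-odds coefficients */
display exp(1.120847)
display exp(.182538)

/* predicted probability for a male (female = 0) with math = 33 */
display invlogit(-11.79228 + .182538*33)

/* predicted probability for a female (female = 1) with math = 33 */
display invlogit(-11.79228 + 1.120847 + .182538*33)
```

Typing logit, or after the model replays the same fit with odds ratios in place of the coefficients.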

margins female, at(math=(33(1)75)) noatlegend

Adjusted predictions                              Number of obs   =        200
Model VCE    : OIM

Expression   : Pr(honors), predict()

------------------------------------------------------------------------------
             |            Delta-method
             |     Margin   Std. Err.      z    P>|z|     [95% Conf. Interval]
-------------+----------------------------------------------------------------
  _at#female |
        1 0  |   .0031146   .0025359     1.23   0.219    -.0018556    .0080849
        1 1  |   .0094928   .0064778     1.47   0.143    -.0032035    .0221891
        2 0  |    .003736    .002943     1.27   0.204    -.0020321    .0095042
        2 1  |   .0113722   .0074494     1.53   0.127    -.0032282    .0259727
        3 0  |   .0044808   .0034116     1.31   0.189    -.0022059    .0111676
        3 1  |   .0136186    .008549     1.59   0.111    -.0031372    .0303744
        4 0  |   .0053734   .0039502     1.36   0.174     -.002369    .0131157
        4 1  |   .0163014   .0097889     1.67   0.096    -.0028845    .0354873
        5 0  |   .0064425    .004568     1.41   0.158    -.0025107    .0153957
        5 1  |   .0195023   .0111807     1.74   0.081    -.0024115     .041416
        6 0  |   .0077227   .0052752     1.46   0.143    -.0026165    .0180619
        6 1  |   .0233167   .0127353     1.83   0.067    -.0016439    .0482774
        7 0  |   .0092549   .0060829     1.52   0.128    -.0026674    .0211772
        7 1  |   .0278561   .0144619     1.93   0.054    -.0004887    .0562008
        8 0  |   .0110878   .0070032     1.58   0.113    -.0026383    .0248138
        8 1  |    .033249   .0163675     2.03   0.042     .0011694    .0653287
        9 0  |   .0132787    .008049     1.65   0.099     -.002497    .0290544
        9 1  |   .0396435   .0184554     2.15   0.032     .0034716    .0758154
       10 0  |   .0158956   .0092339     1.72   0.085    -.0022024    .0339937
       10 1  |   .0472077   .0207245     2.28   0.023     .0065884     .087827
       11 0  |   .0190184   .0105722     1.80   0.072    -.0017028    .0397395
       11 1  |   .0561309   .0231681     2.42   0.015     .0107223    .1015394
       12 0  |   .0227404   .0120787     1.88   0.060    -.0009335    .0464142
       12 1  |   .0666227   .0257726     2.59   0.010     .0161093    .1171362
       13 0  |   .0271706   .0137682     1.97   0.048     .0001855    .0541557
       13 1  |   .0789118   .0285176     2.77   0.006     .0230183    .1348052
       14 0  |   .0324353   .0156553     2.07   0.038     .0017515    .0631191
       14 1  |   .0932411   .0313752     2.97   0.003     .0317468    .1547355
       15 0  |   .0386795   .0177542     2.18   0.029      .003882    .0734771
       15 1  |   .1098622   .0343119     3.20   0.001     .0426121    .1771123
       16 0  |   .0460686   .0200781     2.29   0.022     .0067163     .085421
       16 1  |   .1290245   .0372907     3.46   0.001     .0559361    .2021128
       17 0  |   .0547889   .0226392     2.42   0.016     .0104169    .0991609
       17 1  |   .1509623   .0402752     3.75   0.000     .0720242    .2299003
       18 0  |   .0650472    .025448     2.56   0.011     .0151699    .1149244
       18 1  |   .1758769   .0432356     4.07   0.000     .0911366    .2606172
       19 0  |   .0770696   .0285138     2.70   0.007     .0211836    .1329556
       19 1  |   .2039158   .0461535     4.42   0.000     .1134567    .2943749
       20 0  |   .0910975    .031844     2.86   0.004     .0286844    .1535107
       20 1  |   .2351493   .0490259     4.80   0.000     .1390603    .3312384
       21 0  |   .1073817   .0354449     3.03   0.002     .0379109    .1768525
       21 1  |   .2695471   .0518651     5.20   0.000     .1678933    .3712008
       22 0  |   .1261727   .0393212     3.21   0.001     .0491046    .2032407
       22 1  |   .3069571   .0546905     5.61   0.000     .1997657    .4141486
       23 0  |   .1477078   .0434749     3.40   0.001     .0624987     .232917
       23 1  |   .3470921   .0575137     6.03   0.000     .2343673    .4598169
       24 0  |   .1721942   .0479037     3.59   0.000     .0783047    .2660838
       24 1  |   .3895253   .0603187     6.46   0.000     .2713028    .5077479
       25 0  |   .1997884   .0525966     3.80   0.000     .0967009    .3028759
       25 1  |   .4337002   .0630448     6.88   0.000     .3101346    .5572659
       26 0  |   .2305729   .0575269     4.01   0.000     .1178223    .3433234
       26 1  |   .4789544   .0655796     7.30   0.000     .3504208    .6074881
       27 0  |   .2645327   .0626431     4.22   0.000     .1417545    .3873108
       27 1  |   .5245567   .0677677     7.74   0.000     .3917344    .6573791
       28 0  |   .3015341   .0678593     4.44   0.000     .1685323    .4345358
       28 1  |   .5697531   .0694344     8.21   0.000     .4336642     .705842
       29 0  |   .3413092   .0730471     4.67   0.000     .1981394    .4844789
       29 1  |   .6138161   .0704157     8.72   0.000      .475804    .7518283
       30 0  |   .3834506   .0780334     4.91   0.000     .2305079    .5363933
       30 1  |   .6560904   .0705877     9.29   0.000     .5177411    .7944397
       31 0  |    .427419    .082607     5.17   0.000     .2655122    .5893257
       31 1  |   .6960287   .0698864     9.96   0.000     .5590539    .8330036
       32 0  |   .4725647   .0865352     5.46   0.000     .3029588    .6421705
       32 1  |    .733215    .068315    10.73   0.000       .59932    .8671099
       33 0  |   .5181636   .0895888     5.78   0.000     .3425727    .6937545
       33 1  |   .7673725   .0659387    11.64   0.000     .6381349      .89661
       34 0  |    .563462   .0915711     6.15   0.000     .3839858    .7429381
       34 1  |   .7983594   .0628717    12.70   0.000     .6751332    .9215855
       35 0  |   .6077257   .0923439     6.58   0.000      .426735    .7887164
       35 1  |   .8261538   .0592585    13.94   0.000     .7100092    .9422983
       36 0  |   .6502868   .0918459     7.08   0.000     .4702722    .8303014
       36 1  |   .8508328   .0552568    15.40   0.000     .7425315     .959134
       37 0  |   .6905813    .090099     7.66   0.000     .5139904    .8671722
       37 1  |   .8725489   .0510211    17.10   0.000     .7725493    .9725484
       38 0  |   .7281737   .0872028     8.35   0.000     .5572593     .899088
       38 1  |   .8915066   .0466923    19.09   0.000     .7999914    .9830218
       39 0  |   .7627678   .0833182     9.15   0.000     .5994672    .9260684
       39 1  |    .907942   .0423899    21.42   0.000     .8248592    .9910247
       40 0  |   .7942035   .0786462    10.10   0.000     .6400598    .9483473
       40 1  |   .9221052   .0382099    24.13   0.000     .8472151    .9969952
       41 0  |   .8224434   .0734054    11.20   0.000     .6785715    .9663153
       41 1  |   .9342471   .0342239    27.30   0.000     .8671694    1.001325
       42 0  |   .8475519   .0678112    12.50   0.000     .7146443    .9804595
       42 1  |   .9446101   .0304818    30.99   0.000     .8848668    1.004353
       43 0  |   .8696725    .062061    14.01   0.000     .7480351    .9913099
       43 1  |   .9534213   .0270142    35.29   0.000     .9004744    1.006368
------------------------------------------------------------------------------
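With 86 rows, the two sets of predictions are easier to compare as curves than as a table. A minimal sketch, assuming Stata 12 or later (where marginsplot is available) and that the factor-variable logit model above is still the active estimation result:

```stata
/* rerun margins quietly, then plot the predicted probabilities against math */
quietly margins female, at(math=(33(1)75))
marginsplot, noci
```

Dropping the noci option adds the delta-method confidence intervals to the plot.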

Categorical Data Analysis Course

Phil Ender